
5/29/2023 Expandrive can't play media

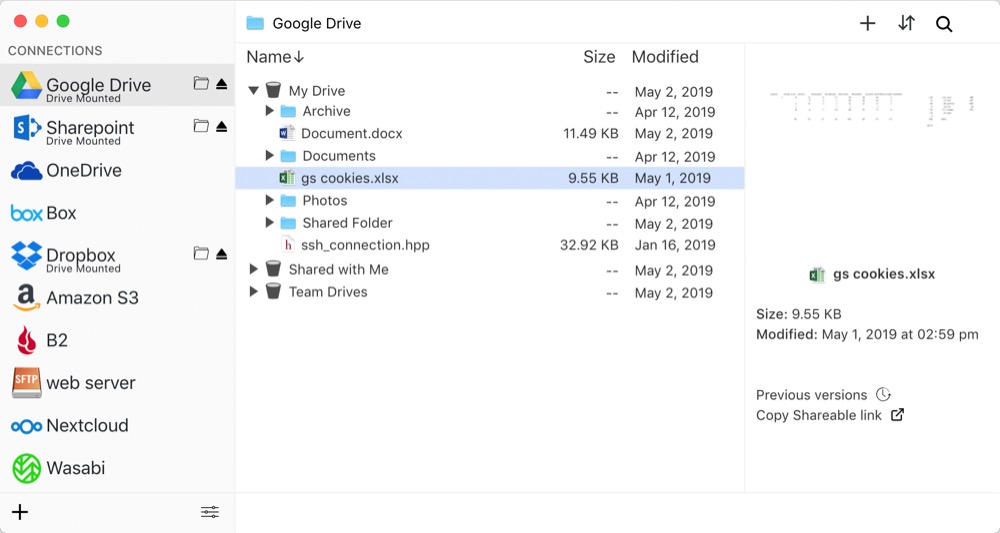

I set Duplicacy up to back up my movies to iDrive e2. I split my libraries to reduce the individual size. Yet I am still running into issues: Duplicacy takes a long time to index each library, which then results in it failing when comparing the file list to the cloud.

I suspect it fails because of the nightly reset of my IP, a behaviour dictated by my ISP that I cannot change. The few seconds my connection drops because of this do not seem to affect uploads, but when it happens during indexing, I think, the indexing fails with the error: ERROR LIST_FILES Failed to list the directory chunks/: RequestError: send request failed. The directories in question range from 500GB to 5TB with 300 to 3500 files.

I am running Duplicacy in a Docker container under unRAID on shucked WD Elements 12TB drives with read speeds of 100-190MB/s (depending on data location on the platter). The system is equipped with an i5-11600K and 32GB of RAM. According to unRAID, I see around 2-4MB/s of reads due to Duplicacy on the drive, with 100MB of RAM used and 0% CPU. The indexing seems to be IOPS-limited, which I cannot change. I’ll be moving from 37Mb/s upload to 100Mb/s shortly. That might help too, but ideally I’d have a complete backup before moving. Should I split up my movie directories further to make indexing easier for Duplicacy? What is my best bet here?

Your issue seems to be related to the ListAllFiles() phase of an initial backup. You probably already have a large number of chunks uploaded from previously aborted backups, and it’s taking a long time to list them. This phase has to be done to avoid re-uploading. Incremental backups don’t do this part, as they use a different process: known chunks from the previous snapshot as a starting point, then a final existence check for each chunk before an upload. You might be able to speed up the scan by using more threads, e.g. -threads 16, if you aren’t already doing so. Another way to mitigate this problem (check and copy do this scanning phase, too) is to re-initialise the backup storage with bigger chunks. That results in fewer chunks, which may be more suitable for such large files anyway.

Personally, I’d avoid using Duplicacy to back up such files altogether, as it adds a big overhead for no real gain (no compression, no de-duplication, no need for versioning). Instead, I use Rclone for movies/TV media and Duplicacy for everything else. With Rclone, you can easily mount a remote storage and get at files more easily.

This sounds like an issue with your storage (iDrive) rather than anything else, if it indeed fails in the phase that lists all the chunks. This is not indexing, by the way. Indexing is listing all the files in your repository, which should take a minimal amount of time (the longest I’ve seen it take is ~10 minutes, with hundreds of thousands if not millions of files on a network drive).

For listing of chunks it is similar. One of my repositories is about 7TB+ at the moment (with default chunking), and it takes about half an hour to list all the chunks. This is with a OneDrive backend; someone else reported similar listing times with Google Drive. So if your connection drop happens only once a day, that should still be fine unless you’re scheduling it at exactly the wrong time. However, if iDrive takes significantly longer (an order of magnitude) to list a directory, this might still be in play.

Having said that, the above is correct: as long as you have one completed backup in your snapshot, a full chunk listing on backup won’t happen. So you can make an initial snapshot with a filter excluding everything except some very small subset of your files (e.g. one file or folder). This will complete quickly (at least on a new storage). Then you remove the filter and continue backing up as usual. It works, but it is not a real solution, as you will still need to list all chunks at some point.

Splitting your source folders into smaller groups won’t help as long as you back up everything to the same storage (shared /chunks folder). Doing what you’re trying to do would require splitting your storages as well (e.g. different root folders on your iDrive), so that the /chunks folders are split too. But that is not really practical.

Try running your operations with the -d flag and see what you find. There are timestamps in there, so you can deduce what is happening and when. But if it is indeed iDrive, it might just not be suitable for your particular use case.
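The question quotes directories of 500GB to 5TB and an upload link moving from 37Mb/s to 100Mb/s. A back-of-the-envelope sketch turns those figures into days of initial upload, and so into how many nightly IP resets a single run would have to survive. It is raw arithmetic (decimal TB, no protocol or storage overhead), so treat the results as lower bounds rather than predictions.

```python
# Initial-upload duration for the sizes and speeds quoted in the question.
# Assumption: pure line-rate arithmetic; real transfers will be slower.

def upload_days(size_bytes: float, uplink_mbit_s: float) -> float:
    """Days needed to push size_bytes through an uplink of uplink_mbit_s."""
    seconds = size_bytes * 8 / (uplink_mbit_s * 1_000_000)
    return seconds / 86_400

for size_tb in (0.5, 5):          # smallest and largest directory sizes
    for uplink in (37, 100):      # current vs upcoming upload speed, Mb/s
        days = upload_days(size_tb * 1e12, uplink)
        print(f"{size_tb:>4} TB @ {uplink:>3} Mb/s: {days:5.1f} days "
              f"(at least {int(days)} nightly resets)")
```

At 37Mb/s a 5TB directory needs roughly 12.5 days, so a single uninterrupted initial backup of it would cross a dozen resets. That is one reason the replies focus on not repeating the full chunk listing.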
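The thread suggests -threads 16 to speed up the chunk scan. The reason extra threads can help a listing that is bound by round-trip latency rather than bandwidth can be sketched with a simulation. Assume 256 prefix directories, each "listed" by a function that only sleeps to mimic one network request. The chunks/XX/ layout and the list_prefix and list_all helpers are hypothetical. This is not Duplicacy's code, and it contacts no storage.

```python
import time
from concurrent.futures import ThreadPoolExecutor

# Hypothetical layout: chunks grouped under 256 two-hex-digit prefixes
# (chunks/00/ ... chunks/ff/). Nothing here contacts a real storage.
PREFIXES = [f"{i:02x}" for i in range(256)]

def list_prefix(prefix: str, latency: float = 0.01) -> list[str]:
    """Pretend to list one prefix directory; the sleep mimics a round trip."""
    time.sleep(latency)
    return [f"chunks/{prefix}/{n}" for n in range(3)]  # fake entries

def list_all(threads: int) -> list[str]:
    """List every prefix with a pool of `threads` workers (order preserved)."""
    with ThreadPoolExecutor(max_workers=threads) as pool:
        batches = pool.map(list_prefix, PREFIXES)
        return [name for batch in batches for name in batch]

for threads in (1, 16):
    start = time.perf_counter()
    names = list_all(threads)
    elapsed = time.perf_counter() - start
    print(f"{threads:>2} thread(s): {len(names)} entries in {elapsed:.2f} s")
```

With one worker the simulated waits add up (about 256 x 10 ms). With 16 workers they overlap and the total drops sharply. A real backend may throttle parallel requests, so the same gain is not guaranteed against iDrive e2.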
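The figures in the second reply (a 7TB+ repository with default chunking, about half an hour to list) can be turned into a rough chunk count and listing rate. The same arithmetic shows why re-initialising with bigger chunks shortens the scan. The sketch assumes Duplicacy's default average chunk size of 4 MiB, a hypothetical 32 MiB for a re-initialised storage, and a listing time that grows linearly with the number of chunks.

```python
# Rough chunk-count arithmetic under the assumptions stated above.
MIB = 1024 ** 2
TIB = 1024 ** 4

def estimate_chunks(repo_bytes: int, avg_chunk_bytes: int) -> int:
    """Approximate chunk count as total size divided by average chunk size."""
    return repo_bytes // avg_chunk_bytes

repo = 7 * TIB                          # "about 7TB+", taken as 7 TiB
default_chunks = estimate_chunks(repo, 4 * MIB)
rate = default_chunks / (30 * 60)       # chunks listed per second in ~30 min

big_chunks = estimate_chunks(repo, 32 * MIB)   # hypothetical larger chunks
big_minutes = big_chunks / rate / 60    # same listing rate, fewer chunks

print(f"4 MiB average:  {default_chunks:,} chunks, ~{rate:,.0f} listed/s")
print(f"32 MiB average: {big_chunks:,} chunks, ~{big_minutes:.1f} min to list")
```

Under these assumptions an 8x larger average chunk means about 8x fewer chunks, and a listing of a few minutes instead of half an hour. The chunk size is chosen when the storage is initialised, which is why the reply talks about re-initialising rather than changing a setting.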
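The error in the question (RequestError: send request failed) is what a single listing request looks like when the connection disappears mid-call. A generic retry-with-exponential-backoff wrapper shows how a client could ride out a drop of a few seconds. This is an illustrative pattern only: with_retries and flaky_list are hypothetical names, and nothing here is a Duplicacy option.

```python
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")

def with_retries(op: Callable[[], T], attempts: int = 5,
                 base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call op(); after a ConnectionError wait base_delay * 2**i and retry."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for i in range(attempts - 1):
        try:
            return op()
        except ConnectionError:
            sleep(base_delay * 2 ** i)   # 1 s, 2 s, 4 s, ... by default
    return op()                          # final attempt: errors propagate

# Hypothetical listing call that fails twice (a brief drop), then succeeds.
calls = {"n": 0}

def flaky_list() -> List[str]:
    calls["n"] += 1
    if calls["n"] <= 2:
        raise ConnectionError("send request failed")
    return ["chunks/00/a", "chunks/01/b"]

waits: List[float] = []
result = with_retries(flaky_list, sleep=waits.append)  # record, don't sleep
print(result, waits)  # ['chunks/00/a', 'chunks/01/b'] [1.0, 2.0]
```

Injecting `sleep` keeps the example instant; in real use the default `time.sleep` applies. With the defaults, waits of 1, 2, 4 and 8 seconds cover about 15 seconds of outage before the last attempt. That is the scale of the drop described in the question.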
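The last reply suggests running with the -d flag and reading the timestamps to see what happens when. A small helper can rank the longest pauses between consecutive timestamped lines, which points at the phase where the time goes. The line format (a leading YYYY-MM-DD HH:MM:SS.mmm timestamp) is an assumption, and the sample lines are made up rather than real Duplicacy output. Adjust the regex to match what your log actually contains.

```python
import re
from datetime import datetime

# Assumed format: each line starts with "YYYY-MM-DD HH:MM:SS.mmm".
TS = re.compile(r"^(\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}\.\d{3})\s+(.*)$")

def biggest_gaps(lines, top=3):
    """Return (seconds, text) for the longest pauses before a logged line."""
    gaps = []
    prev = None
    for line in lines:
        m = TS.match(line)
        if not m:
            continue                      # skip lines without a timestamp
        ts = datetime.strptime(m.group(1), "%Y-%m-%d %H:%M:%S.%f")
        if prev is not None:
            gaps.append(((ts - prev).total_seconds(), m.group(2)))
        prev = ts
    return sorted(gaps, reverse=True)[:top]

# Made-up sample lines, not real Duplicacy output.
sample = [
    "2023-05-29 03:00:00.000 INFO message one",
    "2023-05-29 03:00:02.500 INFO message two",
    "2023-05-29 03:31:02.500 INFO message three",
    "a line without a timestamp",
    "2023-05-29 03:31:03.000 ERROR message four",
]
for seconds, text in biggest_gaps(sample):
    print(f"{seconds:8.1f} s before: {text}")
```

A single gap of tens of minutes right before a listing-related line would support the theory that chunk listing, not file indexing, is the slow step.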
